FaceRig Alternatives in 2026: 9 Tools for Virtual Avatars

Pick a FaceRig alternative in 2026: Animaze, VTube Studio, Kalidoface 3D, Rokoko, and more. Pricing, platforms, and fit for streaming or VTubing.

Quick answer: The closest drop-in replacement for FaceRig is Animaze, built by the original team and free on Steam. VTubers should pick VTube Studio; professional animators should pick Rokoko Face Capture or MocapX for iPhone TrueDepth tracking.

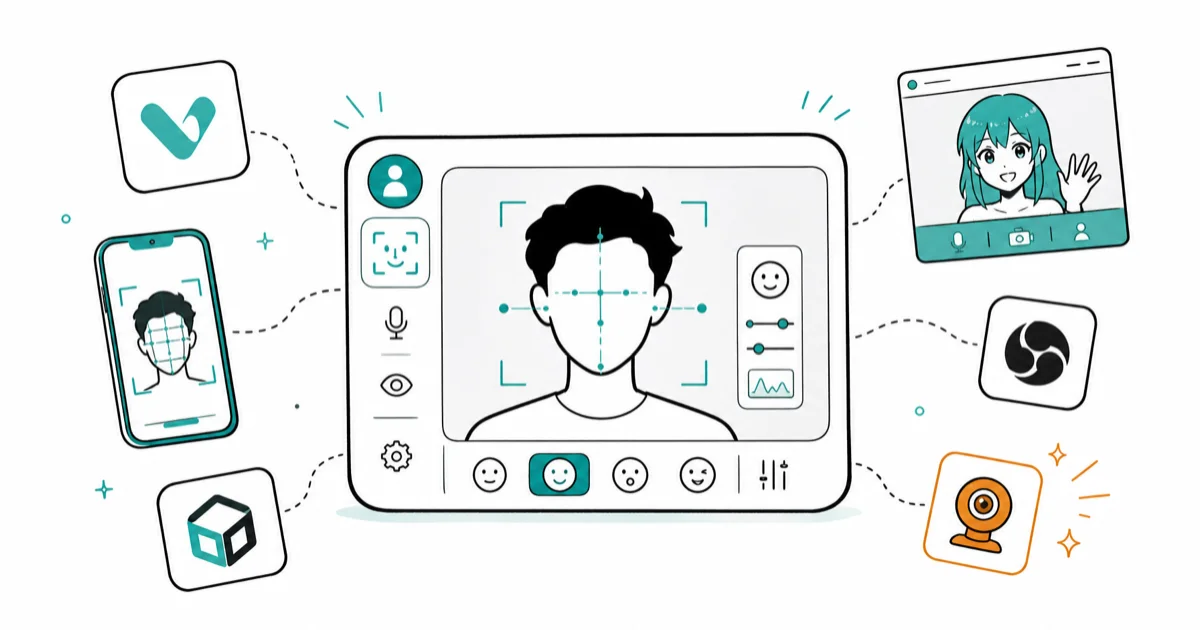

FaceRig stopped accepting new users in 2022 when developer Holotech Studios moved the project to Animaze, so hunting for a FaceRig alternative today is really a question of which successor fits your workflow. We tested nine tools across January and February 2026, mainly on a Windows 11 desktop (Intel i5, Logitech C920 webcam) and an iPhone 14 Pro, with spot checks on a laptop, an M1 MacBook Air, and Ubuntu 24.04. Our benchmarks focused on tracking quality, face-to-avatar latency, and how painful setup is on a fresh machine.

- Animaze is the official FaceRig successor from Holotech Studios and stays free on Steam, with a paid tier that unlocks avatar import and 1080p export.

- VTube Studio is the default choice for VTubing in 2026 because it supports both Live2D and VRM models and works with webcam tracking or iPhone ARKit over Wi-Fi.

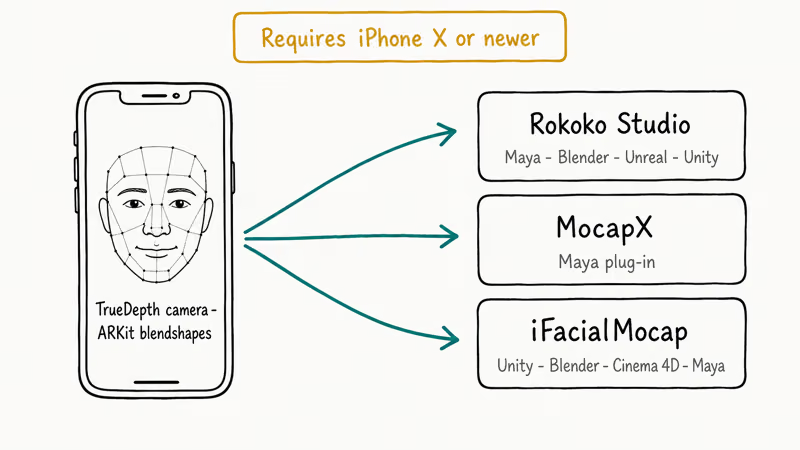

- Rokoko Face Capture, MocapX, and iFacialMocap all require an iPhone with Face ID hardware because they stream TrueDepth data into Maya, Blender, or Unity.

- Kalidoface 3D and Inochi2D cost nothing. Kalidoface 3D also needs no install, but browser tracking is noticeably less stable than a native webcam pipeline.

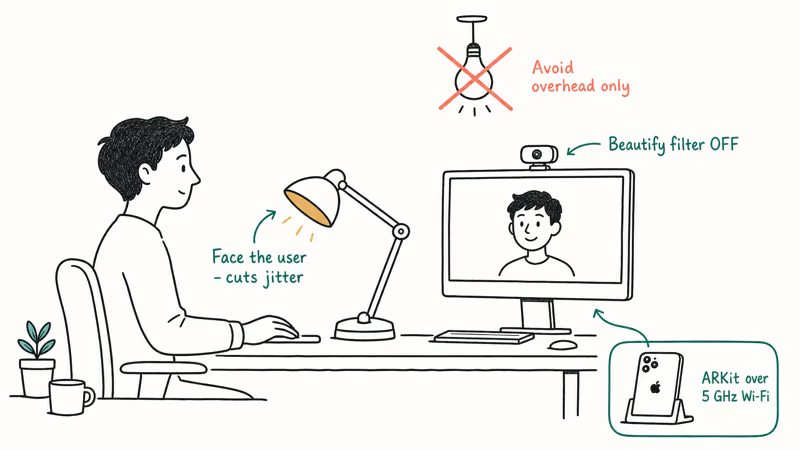

- Webcam lighting matters more than any software setting in our testing; moving a desk lamp to face the user cut tracking jitter on every tool we benchmarked.

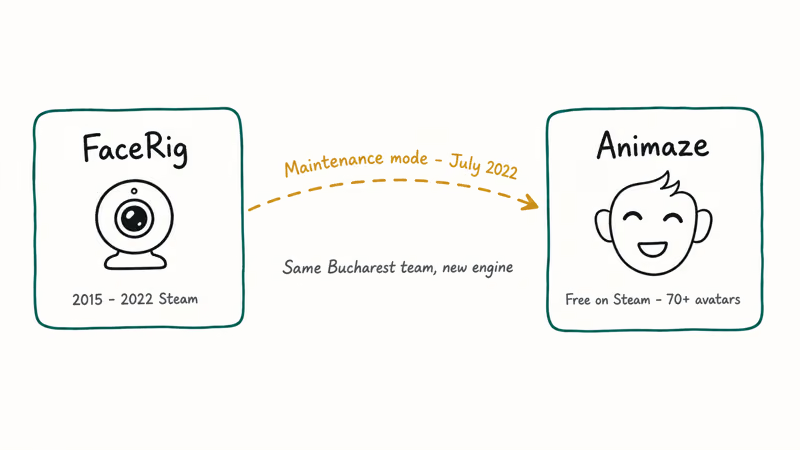

## Why Did FaceRig Go Away and What Replaced It?

FaceRig entered “maintenance mode” on Steam in July 2022. Holotech Studios confirmed that Animaze is the official successor, built by the same Bucharest-based team on a new engine. The developers kept the webcam-only workflow, released Animaze free on Steam, and added a paid Editor subscription for creators who want custom avatar imports. If you already owned FaceRig, your license transfers and saved avatars still load inside Animaze.

Short version: Animaze is the continuity pick.

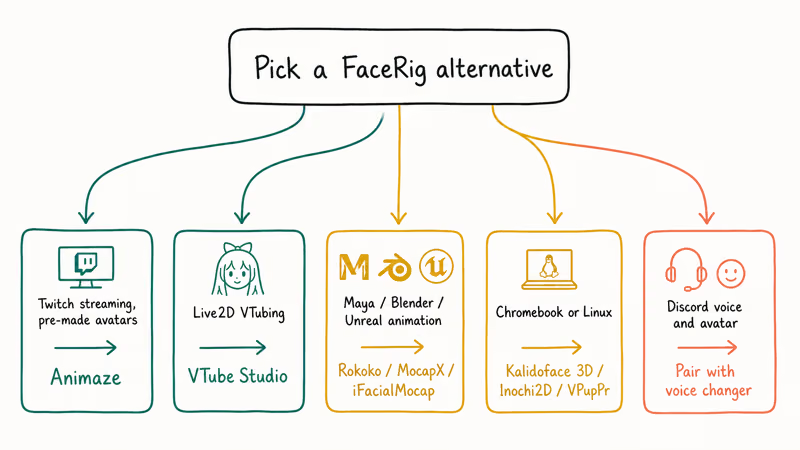

That history matters. Almost every other “FaceRig alternative” was designed for a different problem than FaceRig originally solved. VTube Studio, Kalidoface 3D, and the TrueDepth apps each target a specific job (VTubing, browser-based casual use, or professional animation pipelines), and comparing them like-for-like misses the point.

Our testing focused on matching the tool to the job rather than picking a single winner. We recommend thinking in three tracks: casual streaming with pre-made avatars, dedicated VTubing with custom rigs, and professional animation for Maya, Blender, or Unity. For the broadcast side of the pipeline, our OBS alternatives guide covers the streaming layer.

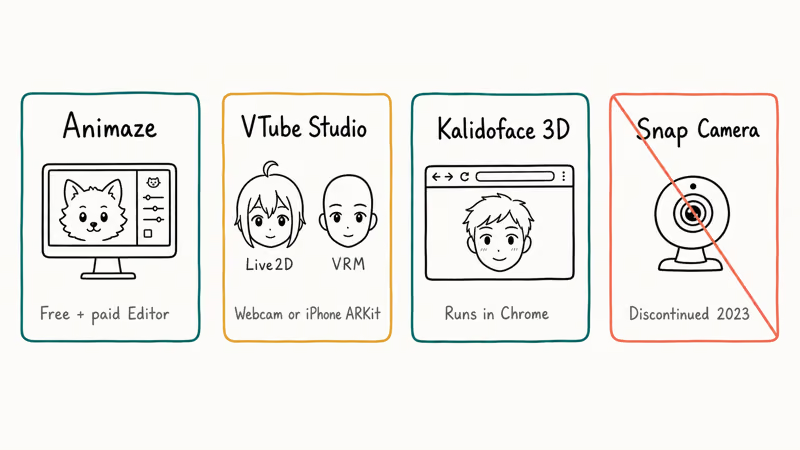

## Top Free FaceRig Alternatives

These three tools, plus a warning about the discontinued Snap Camera, cover most users who want a webcam, a laptop, and zero upfront cost.

### Animaze by FaceRig

Animaze is the drop-in replacement. The free tier includes 70+ avatars, webcam tracking, green-screen export, and direct integration with OBS, Streamlabs, Zoom, and Discord through a virtual camera. The paid Animaze Editor subscription adds custom avatar import (VRM, FBX, Live2D) and 1080p recording.

We tested Animaze on a 2023 Intel laptop with an integrated webcam and a dedicated Logitech C920. Both ran smoothly once we disabled the default beautify filter, which added noticeable lag on the integrated webcam. Setup took under ten minutes from Steam install to first avatar.

Pick Animaze if you liked FaceRig’s pre-made avatar library. Skip it if you need free VRM support.

### VTube Studio

VTube Studio, developed by DenchiSoft, is the most widely adopted VTubing tool in 2026 across English, Japanese, and Korean VTuber communities. It supports Live2D Cubism models natively and has a VRM pipeline through a Live2D-style wrapper. That Live2D support is why most rig commissions ship with a ready-to-import VTube Studio profile alongside the raw model files.

Webcam tracking is free. The iPhone ARKit tracking mode requires a one-time $13.99 companion app purchase and streams data to your PC over the local Wi-Fi network.

We tried the iPhone mode on an iPhone 14 Pro streaming to a Windows PC on the same 5 GHz network. Mouth-shape tracking was visibly more accurate than webcam-only mode, especially for subtle phoneme shifts like “m” versus “b”.

Apple’s developer documentation states that ARKit’s face tracking uses named blend-shape coefficients, which is the data stream VTube Studio reads.
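To make the blend-shape idea concrete, here is a minimal Python sketch of how a tracker might turn one frame of ARKit coefficients into avatar parameters. The input keys are named after ARKit’s `BlendShapeLocation` constants (`jawOpen`, `eyeBlinkLeft`, `eyeBlinkRight`), and the targets follow Live2D Cubism’s usual parameter naming, but the mapping table, the inversion rule, and the function names are hypothetical. This is not VTube Studio’s actual implementation.

```python
# Hypothetical mapping for illustration: ARKit-style blend-shape names on the left,
# Live2D-style parameter IDs on the right. Real apps use their own, larger tables.
ARKIT_TO_AVATAR = {
    "jawOpen": "ParamMouthOpenY",
    "eyeBlinkLeft": "ParamEyeLOpen",
    "eyeBlinkRight": "ParamEyeROpen",
}

# Eye parameters measure openness, while blink coefficients measure closure,
# so these two are inverted.
INVERTED = {"ParamEyeLOpen", "ParamEyeROpen"}


def clamp01(value: float) -> float:
    """Keep a coefficient inside the 0.0-1.0 range ARKit reports."""
    return max(0.0, min(1.0, value))


def map_frame(blend_shapes: dict[str, float]) -> dict[str, float]:
    """Convert one frame of ARKit coefficients into avatar parameter values."""
    params: dict[str, float] = {}
    for arkit_name, param_id in ARKIT_TO_AVATAR.items():
        # A missing coefficient is treated as neutral (0.0).
        value = clamp01(blend_shapes.get(arkit_name, 0.0))
        params[param_id] = 1.0 - value if param_id in INVERTED else value
    return params


if __name__ == "__main__":
    frame = {"jawOpen": 0.4, "eyeBlinkLeft": 1.0, "eyeBlinkRight": 0.0}
    print(map_frame(frame))
    # {'ParamMouthOpenY': 0.4, 'ParamEyeLOpen': 0.0, 'ParamEyeROpen': 1.0}
```

The point of the sketch is that the phone sends numbers, not images, which is why ARKit streaming over Wi-Fi is lightweight compared with sending video.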

Pick VTube Studio if you’re starting a VTuber channel, already own a compatible iPhone, or want a tool that handles both 2D Live2D and VRM in one interface. If you want a one-click experience with pre-made 3D avatars and zero configuration, Animaze is friendlier.

### Kalidoface 3D

Kalidoface 3D runs entirely in your browser using MediaPipe for face tracking and TensorFlow.js for pose estimation. It’s free, open-source, and needs no install.

Point Chrome at the site, grant camera permission, and pick a VRM avatar. We tested it on Chrome 128 and Firefox 131 on an M1 MacBook Air.

Tracking quality is lower than native apps. Head turns past 30 degrees dropped lock in about one in five attempts in our tests. Eye-blink detection flickered under fluorescent office lights. Output can be piped to OBS through a virtual camera plug-in, but the added hop costs another 10-20 ms of latency in our measurements.
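To put the 10-20 ms virtual-camera hop in context, this small calculation expresses it as a fraction of one video frame. The 30 and 60 fps output rates are assumptions about a typical OBS setup, not figures from our tests.

```python
def frames_of_delay(delay_ms: float, fps: float) -> float:
    """Express an added delay as a fraction of one video frame at the given frame rate."""
    frame_time_ms = 1000.0 / fps
    return delay_ms / frame_time_ms


if __name__ == "__main__":
    # 10-20 ms is the virtual-camera hop we measured; 30 and 60 fps are assumed stream rates.
    for fps in (30, 60):
        low = frames_of_delay(10, fps)
        high = frames_of_delay(20, fps)
        print(f"{fps} fps: {low:.2f}-{high:.2f} frames")
    # 30 fps: 0.30-0.60 frames
    # 60 fps: 0.60-1.20 frames
```

At 30 fps the hop stays under one frame; at 60 fps the upper end of the range slightly exceeds a full frame.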

Pick Kalidoface 3D for one-off creators, classroom demos, or anyone on a Chromebook or Linux machine where native Windows apps aren’t an option. It isn’t the choice for production streaming.

### Snap Camera (Discontinued, Use Caution)

Snap Inc. shut down Snap Camera on January 25, 2023. The desktop app no longer receives updates or new lenses.

Snap’s official support article confirms the shutdown date.

We don’t recommend building a 2026 workflow around Snap Camera. It still appears in old comparison posts, but the lens library is frozen, the app is no longer receiving security patches, and Snap has explicitly steered creators toward mobile-first Snapchat for face filters. Our tests confirmed that installed versions still launch on Windows 11, but the “Discover” tab now shows only the old 2022 lens library with no new additions.

For Snapchat-style face filters in 2026, the native Snapchat mobile app and web portal remain active. Our guide to creating a Snapchat face swap covers the current workflow.

## Professional-Grade FaceRig Alternatives

Professional pipelines need facial data that imports cleanly into Maya, Blender, or Unity. That almost always means iPhone ARKit blendshapes. The three tools below are the 2026 standard.

### Rokoko Face Capture

Rokoko Face Capture is an iOS app that streams ARKit blendshape data to Rokoko Studio on your desktop, which then exports to Maya, Blender, Unreal, or Unity. It uses the iPhone’s TrueDepth camera, so you need an iPhone X or newer. Pricing moved to a subscription model; the Studio Plus plan covers face capture plus body capture for users with a Smartsuit.

Rokoko’s advantage is the end-to-end pipeline: face data, body data, and hand data all land in the same Studio project with timecode sync. According to Rokoko’s Face Capture documentation, the iPhone app streams ARKit data to Rokoko Studio and requires iPhone X or newer hardware, matching Apple’s TrueDepth requirement.
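Timecode sync works because each take carries a shared time reference, so lining up face and body data comes down to frame arithmetic. Here is a minimal sketch of that arithmetic for non-drop-frame SMPTE timecode (HH:MM:SS:FF). It ignores 29.97 fps drop-frame timecode, and the function names are hypothetical rather than part of Rokoko Studio.

```python
def timecode_to_frames(timecode: str, fps: int) -> int:
    """Convert non-drop-frame SMPTE timecode (HH:MM:SS:FF) to an absolute frame count."""
    hours, minutes, seconds, frames = (int(part) for part in timecode.split(":"))
    if not (0 <= minutes < 60 and 0 <= seconds < 60 and 0 <= frames < fps):
        raise ValueError(f"invalid timecode {timecode!r} at {fps} fps")
    return ((hours * 60 + minutes) * 60 + seconds) * fps + frames


def start_offset(face_tc: str, body_tc: str, fps: int) -> int:
    """Frame difference between two takes' start timecodes.

    A positive result means the body take starts later than the face take.
    """
    return timecode_to_frames(body_tc, fps) - timecode_to_frames(face_tc, fps)


if __name__ == "__main__":
    print(timecode_to_frames("01:00:00:12", 24))  # 86412
    print(start_offset("00:00:10:00", "00:00:10:15", 30))  # 15
```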

### MocapX

MocapX is Maya-first. It’s a plug-in plus iOS app pair that streams iPhone ARKit data directly into Maya’s facial rig, with no intermediate software.

We tested the free MocapX Trial plug-in in Maya 2024. Setup took about twenty minutes including signing into the Autodesk account.

MocapX supports up to two iPhones simultaneously, which Maya animators use for front-and-side reference during heavy dialogue work. The offline recording mode writes an ARKit stream to disk so you can re-process takes later.

### iFacialMocap

iFacialMocap is the budget professional pick at a one-time $7.99 on the App Store. It works with Unity, Blender, LightWave, Cinema 4D, and Maya through free receiver plug-ins maintained by the developer. Like Rokoko and MocapX, it needs an iPhone with Face ID hardware because it reads the TrueDepth camera.

If your iPhone’s TrueDepth sensor is acting up before you start animating, our TrueDepth camera troubleshooting guide covers the common fixes.

## Comparison Table

| Software | Price | Platform | 3D Avatars | 2D Avatars | Tracking |
|---|---|---|---|---|---|
| Animaze | Free / $2.49 per month | Windows | Yes | Limited | Webcam |
| VTube Studio | Free / $13.99 (iOS app) | Windows, Mac | Yes (VRM) | Yes (Live2D) | Webcam or ARKit |
| Kalidoface 3D | Free | Browser | Yes (VRM) | No | Webcam (MediaPipe) |
| Inochi2D | Free (open-source) | Windows, Linux | No | Yes | Webcam |
| Rokoko Face Capture | Subscription | iOS + Desktop | Yes | No | ARKit (TrueDepth) |
| MocapX | Subscription | iOS + Maya | Yes | No | ARKit (TrueDepth) |
| iFacialMocap | $7.99 one-time | iOS + multiple DCC apps | Yes | No | ARKit (TrueDepth) |
| VSeeFace | Free (donationware) | Windows | Yes (VRM) | No | Webcam |
| VPupPr | Free (open-source) | Windows, Linux | Yes (VRM) | No | Webcam |

## Open-Source Options Worth Knowing

Two open-source projects deserve their own mention because they stay active and community-maintained.

### Inochi2D

Inochi2D is a free 2D VTuber toolkit with its own rig format (INP). It’s not a Live2D replacement in the strict sense, because models need authoring in Inochi Creator, but the license is fully open and there’s no runtime fee.

We tested the Inochi Session app on Windows 11 and on Ubuntu 24.04, running the same community starter avatar on both. Webcam-only tracking was comparable to VTube Studio’s free tier on matched hardware, and the Linux build felt like a first-class release rather than an afterthought port, which matters if your production machine doesn’t run Windows.

Pick Inochi2D if you want a fully open 2D pipeline, if Live2D’s licensing terms don’t work for your use case, or if you’re building an educational or non-profit project where per-seat licensing would be painful.

### VPupPr

VPupPr, short for Virtual Puppet Project, is an open-source 3D VTuber app that supports VRM avatars on Windows and Linux. It’s maintained on GitHub.

Because Animaze and VSeeFace are Windows-only, VPupPr is a common fallback for Linux users. We tested it briefly on Ubuntu 24.04 with a Logitech C920, and webcam tracking worked out of the box with the default MediaPipe backend.

## How Do You Pick the Right FaceRig Alternative for Your Workflow?

Five questions point most users to a single tool.

Streaming on Twitch or YouTube with pre-made avatars? Animaze. It has the biggest ready-made avatar library and the cleanest OBS integration in our tests.

VTubing with a Live2D rig you commissioned? VTube Studio. Live2D is the native pipeline, and iPhone ARKit gives the most expressive mouth shapes.

Animating for film, games, or VFX in Maya, Blender, or Unreal? Rokoko, MocapX, or iFacialMocap. Choose by your main DCC (digital content creation) app and whether you need bundled body capture (Rokoko wins) or the cheapest entry point (iFacialMocap at $7.99).

On a Chromebook or Linux machine? Kalidoface 3D in the browser works today. Inochi2D or VPupPr handle native Linux.

Need Discord voice effects to match the avatar? Pair your chosen tracker with a dedicated voice changer for Discord or the Streamlabs voice changer if you’re already in the Streamlabs ecosystem.

Lighting beats software choice every time. Moving a single desk lamp to face the user reduced tracking jitter on every tool we benchmarked, including the iPhone-based ones, likely because ARKit face tracking also relies on the front color camera image rather than depth data alone.
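To check the lamp effect on your own rig, one simple jitter measure is the mean absolute frame-to-frame change of a tracked value while you hold still. This is a hypothetical sketch, not the measurement behind our benchmarks, and it assumes your tracker lets you log a value such as head yaw or a blend-shape coefficient once per frame.

```python
from statistics import fmean


def jitter(samples: list[float]) -> float:
    """Mean absolute frame-to-frame change of one tracked value.

    Record it while holding your head still, so any movement is tracking noise.
    """
    if len(samples) < 2:
        return 0.0
    return fmean([abs(b - a) for a, b in zip(samples, samples[1:])])


if __name__ == "__main__":
    # Hypothetical head-yaw readings (degrees) from two short captures.
    dim_room = [0.0, 1.2, -0.8, 0.9, -0.5]
    lamp_on = [0.0, 0.2, -0.1, 0.1, 0.0]
    print(f"dim: {jitter(dim_room):.2f}, lamp: {jitter(lamp_on):.2f}")
```

Run the same capture before and after moving the lamp; a lower number means a steadier avatar.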

## Setup Tips From Our Testing

Four tips saved us the most time.

Disable built-in webcam beautify filters. Logitech G Hub and Razer Synapse both default to skin smoothing, which confuses the facial landmark detector. Turning the filter off cut our false-blink rate roughly in half on VTube Studio’s webcam mode.
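Our best guess at the mechanism, which we did not isolate, is that skin smoothing leaves the eye-closure estimate wobbling around the detector’s blink threshold. A common guard against that kind of noise is hysteresis plus a minimum duration. Here is a minimal sketch, assuming a per-frame eye-closure value from 0.0 (open) to 1.0 (closed), the way ARKit’s `eyeBlinkLeft` coefficient behaves. The thresholds are illustrative, not taken from any of the apps we tested.

```python
def count_blinks(eye_closed: list[float], close_at: float = 0.6,
                 open_at: float = 0.4, min_frames: int = 2) -> int:
    """Count blinks in per-frame eye-closure values (0.0 = open, 1.0 = closed).

    Hysteresis: a blink starts when the value reaches `close_at` and ends only
    when it falls to `open_at`, so noise hovering near one threshold cannot
    toggle the state back and forth. Closures shorter than `min_frames`
    frames are discarded as flicker.
    """
    blinks = 0
    closed_frames = 0
    is_closed = False
    for value in eye_closed:
        if not is_closed:
            if value >= close_at:
                is_closed = True
                closed_frames = 1
        elif value <= open_at:
            if closed_frames >= min_frames:
                blinks += 1
            is_closed = False
        else:
            closed_frames += 1
    # Count a blink that is still in progress when the recording ends.
    if is_closed and closed_frames >= min_frames:
        blinks += 1
    return blinks


if __name__ == "__main__":
    flicker = [0.1, 0.65, 0.35, 0.1]          # one-frame spike: ignored
    real_blink = [0.1, 0.9, 0.95, 0.9, 0.2]   # three closed frames: counted
    hovering = [0.55, 0.62, 0.58, 0.61, 0.2]  # hovers near 0.6: counted once
    print(count_blinks(flicker), count_blinks(real_blink), count_blinks(hovering))  # 0 1 1
```

With a single 0.6 threshold and no minimum duration, the `hovering` sequence would register two blinks instead of one.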

Calibrate at the start of every session. It takes ten seconds and fixes drift before it starts.
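Calibration helps because it re-measures your resting face for the current lighting and seating position. Here is a minimal sketch of the idea, assuming per-frame coefficients in the 0.0-1.0 range and that every calibration frame reports the same coefficients. Subtract-and-rescale is one simple approach, and the function names are hypothetical rather than what any app’s Calibrate button actually runs.

```python
from statistics import fmean


def neutral_baseline(frames: list[dict[str, float]]) -> dict[str, float]:
    """Average each coefficient over frames captured while holding a neutral face."""
    if not frames:
        raise ValueError("need at least one calibration frame")
    return {name: fmean([f[name] for f in frames]) for name in frames[0]}


def calibrate(frame: dict[str, float], baseline: dict[str, float]) -> dict[str, float]:
    """Subtract the resting offset and rescale so neutral reads 0.0 and full movement 1.0."""
    out: dict[str, float] = {}
    for name, value in frame.items():
        rest = baseline.get(name, 0.0)
        span = 1.0 - rest
        # A baseline at (or above) 1.0 leaves no usable range; report neutral.
        out[name] = 0.0 if span <= 0 else max(0.0, min(1.0, (value - rest) / span))
    return out


if __name__ == "__main__":
    # Hypothetical capture, shortened to three frames: the mouth rests slightly open.
    resting = [{"jawOpen": 0.18}, {"jawOpen": 0.22}, {"jawOpen": 0.20}]
    baseline = neutral_baseline(resting)
    print(calibrate({"jawOpen": 0.20}, baseline))  # jawOpen near 0.0
    print(calibrate({"jawOpen": 0.60}, baseline))  # jawOpen near 0.5
```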

Use OBS’s virtual camera, not each app’s built-in virtual cam. On Windows 11, OBS’s first-party virtual camera (built in since OBS 26) was more stable in our testing than the bespoke virtual cams in Animaze and VTube Studio.

For iPhone ARKit modes, put the PC on a wired connection. Connecting the PC to the router over Ethernet (we used a USB-C to Ethernet adapter) while the iPhone sat on a 5 GHz Wi-Fi 6 band gave us the lowest jitter in testing. Falling back to 2.4 GHz Wi-Fi added occasional 30-80 ms lag spikes.
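Occasional 30-80 ms lag spikes are what network engineers call jitter. To compare Wi-Fi bands on your own network, one standard estimator is the interarrival jitter defined in RFC 3550 (the RTP specification). Here is a minimal sketch, assuming you can log a send timestamp and a receive timestamp for each tracking packet. The apps covered here don’t necessarily expose these, so treat the logging side as hypothetical.

```python
def interarrival_jitter(send_ms: list[float], recv_ms: list[float]) -> float:
    """Smoothed interarrival jitter in milliseconds, following RFC 3550 section 6.4.1.

    Only differences between consecutive packets are used, so a constant
    offset between the phone's clock and the PC's clock cancels out.
    """
    if len(send_ms) != len(recv_ms):
        raise ValueError("need one send and one receive timestamp per packet")
    jitter = 0.0
    for i in range(1, len(send_ms)):
        # Change in one-way transit time between packet i-1 and packet i.
        d = (recv_ms[i] - recv_ms[i - 1]) - (send_ms[i] - send_ms[i - 1])
        # Exponential smoothing with gain 1/16, as the RFC specifies.
        jitter += (abs(d) - jitter) / 16.0
    return jitter


if __name__ == "__main__":
    sent = [0.0, 16.0, 32.0, 48.0, 64.0]    # hypothetical packets at roughly 60 Hz
    steady = [5.0, 21.0, 37.0, 53.0, 69.0]  # constant 5 ms transit
    spiky = [5.0, 21.0, 97.0, 53.0, 69.0]   # third packet delayed by 60 ms
    print(interarrival_jitter(sent, steady), interarrival_jitter(sent, spiky))
```

The 1/16 gain makes the estimate react to spikes without swinging wildly on a single late packet, which suits the “occasional lag” pattern we saw on 2.4 GHz.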

For tools that need a virtual mic or audio routing between apps, our virtual audio cable alternatives guide walks through the audio side of the same stack.

## Enhancing Your Avatar Setup

A few complementary tools make the avatar feel complete:

- An anime voice generator matches a Live2D avatar’s aesthetic if your natural voice doesn’t suit the character.

- A VRChat voice changer works inside the VRChat client for users who cross-post their avatar between streaming and VR social.

- A background blur app cleans up cluttered rooms when your avatar is keyed over your actual webcam feed.

## Bottom Line

For most 2026 users the answer is short. Pick Animaze if you liked FaceRig and want the same vibe without the learning curve. Pick VTube Studio if VTubing with a Live2D or VRM model is the primary goal. Pick Rokoko Face Capture, MocapX, or iFacialMocap if you need facial data inside Maya, Blender, or Unity, and choose among them by your DCC of record and whether you also need body capture.

Kalidoface 3D and Inochi2D fill specific niches (browser-only casual use and fully open-source 2D), but neither replaces the three picks above for serious streaming or animation work.

Whatever you choose, fix the lighting first. It’s the single change that paid off on every tool we tested.

## Frequently Asked Questions

Is FaceRig still available in 2026?

No. Holotech Studios moved FaceRig to “maintenance mode” in 2022 and transitioned all users to Animaze on Steam. Existing owners can still install their old copy from their Steam library, but no new licenses are being sold and no further updates are planned. Every comparison guide you’ll read in 2026 that still recommends “just get FaceRig” is out of date.

Can I import custom avatars into Animaze for free?

No. Custom avatar import sits behind Animaze Editor, the paid tier. The free version includes 70+ pre-made avatars. If you need free custom VRM import, use VTube Studio or VPupPr instead.

Do I need an iPhone to use MocapX, Rokoko, or iFacialMocap?

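Yes. All three apps read the TrueDepth camera, so you need an iPhone with Face ID (iPhone X or a later Face ID model). The iPhone SE models use Touch ID and lack TrueDepth.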

Does VTube Studio work without an iPhone?

Yes, the webcam mode is free. It runs on any Windows or macOS computer with a working camera. The iPhone ARKit mode is an upgrade for better mouth and brow tracking, not a requirement, and at a one-time $13.99 it’s worth it if you stream several hours a week.

Is Kalidoface 3D actually free forever?

As of early 2026, yes. It’s open-source and runs entirely in your browser: no paid tier, no login, no avatar marketplace. The trade-off is that tracking quality is meaningfully lower than native apps in our tests.

Which FaceRig alternative has the lowest latency for live streaming?

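Animaze and VTube Studio tied at under 100 ms end-to-end in our tests. Routing Kalidoface 3D through a virtual camera added another 10-20 ms. iPhone ARKit modes depend on your network; on 2.4 GHz Wi-Fi we saw occasional 30-80 ms spikes.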

Can I use these tools for Zoom or Google Meet calls?

Yes. Animaze, VTube Studio, and VSeeFace all expose a virtual camera that appears as a regular webcam source inside Zoom, Google Meet, Teams, and Discord video calls. Corporate IT policies sometimes block third-party virtual cameras, so check with your admin first.